Quick Navigation — Jump to any topic. Scan before interviews.

| Deep Learning | NLP & LLMs | Dimensionality Reduction |

|---|---|---|

| MLP | Transformer Architecture | Covariance & Correlation |

| Activation Functions | Tokenization | PCA |

| Deep Neural Networks | Temperature & Sampling | LDA |

| CNN | Context Engineering | SVD |

| RNN | NLP Feature Extraction | |

| CNN vs RNN | LLM Hallucination | |

| Graph Neural Networks | Prompt Engineering Techniques |

| LLM Training & Fine-Tuning |

|---|

| Prompt Engineering vs Fine-tuning |

| LoRA / PEFT |

| Fine-tuning Decision Framework |

| Continued Pretraining |

| RLHF & Preference Optimization |

| Transformer Memory Optimization |

Types of Machine Learning¶

One-liner: ML is categorized by how the model learns from data — with labels (supervised), without labels (unsupervised), from self-generated targets (self-supervised), with partial labels (semi-supervised), or through trial and reward (reinforcement learning).

| Type | Data | Goal | Examples |

|---|---|---|---|

| Supervised | X + labels y | Learn f(X) → y | Classification, Regression |

| Unsupervised | X only | Find structure | Clustering, PCA, Anomaly detection |

| Self-Supervised | X only (targets from X) | Learn representations | GPT pretraining, BERT MLM, CLIP |

| Semi-Supervised | Small labeled + large unlabeled | Boost performance with limited labels | Pseudo-labeling, FixMatch |

| Reinforcement Learning | States + actions + rewards | Maximize cumulative reward | Games, robotics, RLHF |

Quick Decision Guide: - Supervised: You have reliable labels and want best task performance - Unsupervised: You want structure/segmentation/embeddings without labels - Self-supervised: You want a strong base model from lots of raw data - Semi-supervised: Labels are scarce but you have lots of unlabeled in-domain data - RL: Sequential decisions with (delayed) rewards

[!WARNING] Common confusion: Self-supervised ≠ Unsupervised! - Self-supervised creates targets FROM the data itself (e.g., next token prediction) - Unsupervised finds structure without any targets (e.g., clustering)

LLM Lifecycle (Interview Framing): 1. Pretraining (Self-supervised): Learn language patterns from raw text 2. SFT - Supervised Fine-Tuning: Instruction tuning on (prompt, response) pairs 3. Preference Tuning (RLHF/DPO): Optimize for human-preferred outputs 4. Deployment: RAG, tool use, guardrails, monitoring

📖 See also: RLHF & Preference Optimization for detailed coverage of stage 3.

Interview Answer Template:

"Machine learning has 5 main paradigms. Supervised learning uses labeled data to predict outputs—classification or regression. Unsupervised learning finds patterns without labels, like clustering. Self-supervised learning, used in LLM pretraining, creates targets from the data itself—GPT predicts the next token, BERT predicts masked tokens. Semi-supervised combines small labeled data with large unlabeled data using techniques like pseudo-labeling. Reinforcement learning learns through trial and reward, used in games and RLHF for aligning LLMs. Modern LLMs typically combine self-supervised pretraining, supervised fine-tuning, and RLHF alignment."

Detailed Explanation¶

Supervised Learning (X, y labeled)¶

Definition: Learn a function f(X) → y from labeled examples.

When to use: Historical labeled data with clear target. Typical business DS: churn, fraud, pricing, forecasting.

Common algorithms: - Linear/Logistic Regression, Naive Bayes - Trees, Random Forest - Gradient Boosting: XGBoost/LightGBM/CatBoost - SVM, kNN - Neural Nets (when trained on labeled data)

Pros: Clear objective; evaluation straightforward; best performance with quality labels.

Cons: Label cost/quality issues, leakage, distribution shift, class imbalance.

LLM tie-in: SFT (Supervised Fine-Tuning) / instruction tuning uses labeled (prompt, response) pairs.

Unsupervised Learning (only X)¶

Definition: Find structure in data without explicit labels.

When to use: No labels, exploratory analysis, segmentation, compression, anomaly discovery.

Common methods: - Clustering: k-means, GMM, hierarchical, DBSCAN - Dimensionality reduction: PCA, UMAP, t-SNE - Anomaly detection: Isolation Forest, One-Class SVM - Generative: Autoencoders, VAEs, GANs

Pros: Works without labels; great for discovery and feature learning.

Cons: Evaluation is tricky (no "ground truth"); depends on scaling, distance metric.

LLM tie-in: Used for embeddings + clustering (topic discovery) and retrieval workflows.

Self-Supervised Learning (targets derived from X)¶

Definition: Use unlabeled data but create supervised-style objectives from the data itself.

When to use: Lots of raw data, few labels; foundation model training.

Canonical objectives: - Language: Causal LM (GPT - next token), Masked LM (BERT), Seq2seq denoising (T5) - Vision/Multimodal: Contrastive (CLIP, SimCLR), Masked autoencoding (MAE)

Pros: Scales with data; produces general-purpose representations.

Cons: Compute/data hungry; may encode biases from web-scale data.

LLM tie-in: This is how base LLMs are trained (the "P" in "pretrained").

Semi-Supervised Learning (small labeled + large unlabeled)¶

Definition: Train using both labeled and unlabeled data for the same task.

When to use: Labels are expensive; unlabeled data is abundant; domain is stable.

Common methods: Pseudo-labeling, consistency regularization (FixMatch, MixMatch), label propagation.

Pros: Boosts accuracy with limited labels.

Cons: Confirmation bias from wrong pseudo-labels; sensitive to distribution mismatch.

Reinforcement Learning (interaction + reward)¶

Definition: Agent learns a policy to maximize expected cumulative reward.

When to use: Sequential decisions with delayed consequences—robotics, games, recommendations.

Main families: - Value-based: Q-learning, DQN - Policy-based: REINFORCE - Actor-Critic: A2C/A3C, PPO, SAC - Model-based: Dyna, MCTS, AlphaZero

Pros: Handles sequential, delayed rewards.

Cons: Sample inefficient; unstable training; reward design is hard.

LLM tie-in: RLHF optimizes LLM behavior using a reward model trained from human preferences (often PPO). DPO optimizes directly from preference pairs without explicit RL loop.

Interview Q&A (High-frequency)¶

Q: Supervised vs Unsupervised vs Self-Supervised? - Supervised: learns from human-provided labels y - Unsupervised: no labels, discover structure - Self-supervised: no human labels, but creates targets from data itself

Q: Where do LLMs fit? - Base/pretrained LLM: Self-supervised (pretraining) - Instruction tuning (SFT): Supervised - Alignment: RLHF or DPO

Q: RLHF vs SFT vs DPO?

📖 Deep dive: See RLHF & Preference Optimization for detailed comparison of RLHF, DPO, GRPO, and when to use each.

Q: When should you NOT use RL? - If you can frame it as supervised learning - If exploration is costly/unsafe - If reward is poorly defined (reward hacking risk)

Loss Functions¶

One-liner: Functions that measure prediction error: MSE for regression, cross-entropy for classification.

Loss functions measure how wrong our model's predictions are. They are used during training to guide optimization (gradient descent).

Loss Functions by Model Type¶

| Model | Task | Loss Function | Optimization |

|---|---|---|---|

| Logistic Regression | Classification | Cross-Entropy (Log Loss) | Gradient Descent |

| Neural Network (clf) | Classification | Cross-Entropy | Backprop + Gradient Descent |

| Linear Regression | Regression | MSE | Gradient Descent or Closed-form |

| Neural Network (reg) | Regression | MSE or MAE | Backprop + Gradient Descent |

| Decision Tree | Both | N/A (Gini/Entropy/MSE for splits) | Greedy splitting (no GD) |

| Gradient Boosting | Both | Cross-Entropy (clf) / MSE (reg) | GD on loss, trees fit residuals |

Key distinction: Decision Trees don't use gradient descent - they use greedy recursive splitting. Gradient Boosting uses gradient descent on the loss function, but individual trees still split using impurity measures.

Common Loss Functions¶

For Regression: | Loss | Formula | When to Use | |------|---------|-------------| | MSE | \(\frac{1}{n}\sum(y - \hat{y})^2\) | Default choice, penalizes large errors heavily | | MAE | \(\frac{1}{n}\sum\lvert y - \hat{y} \rvert\) | More robust to outliers | | Huber | MSE when error small, MAE when large | Balance between MSE and MAE |

For Classification: | Loss | Formula | When to Use | |------|---------|-------------| | Cross-Entropy (Log Loss) | \(-\sum[y\log(\hat{p}) + (1-y)\log(1-\hat{p})]\) | Binary/multi-class classification | | Hinge Loss | \(\max(0, 1 - y \cdot \hat{y})\) | SVM, margin-based classification | | Focal Loss | \(-\alpha(1-\hat{p})^\gamma \log(\hat{p})\) | Imbalanced classification |

Cross-Entropy vs Binary Cross-Entropy¶

In binary classification, cross-entropy and binary cross-entropy are mathematically equivalent.

Binary Cross-Entropy (single sample with \(y \in \{0, 1\}\) and predicted probability \(p\)): $\(\text{BCE} = -[y \log(p) + (1-y) \log(1-p)]\)$

General Cross-Entropy (for \(K\) classes): $\(\text{CE} = -\sum_{k=1}^{K} q_k \log(p_k)\)$

When \(K=2\), the general formula reduces to BCE since \(q_0 = 1 - q_1\) and \(p_0 = 1 - p_1\).

Practical difference in frameworks (PyTorch/TensorFlow):

| Function | Expected Output | Use Case |

|---|---|---|

BinaryCrossEntropy |

Single sigmoid output (1 neuron) | Binary classification |

CategoricalCrossEntropy |

Softmax outputs (K neurons) | Multi-class classification |

For binary classification, using 2 softmax outputs vs 1 sigmoid output gives mathematically equivalent loss values—they're just different parameterizations.

Loss Functions vs Evaluation Metrics¶

| Aspect | Loss Functions | Evaluation Metrics |

|---|---|---|

| Purpose | Guide optimization during training | Measure final model performance |

| Requirements | Must be differentiable (for GD) | Can be non-differentiable |

| Examples | Cross-Entropy, MSE | Accuracy, F1, AUC-ROC |

Note: Sometimes we optimize one loss but evaluate with a different metric. E.g., train with Cross-Entropy but report F1-score.

Linear Regression¶

One-liner: Linear regression models the relationship between input features (\(X\)) and output (\(y\)) using a linear equation, fitting by minimizing the sum of squared errors (SSE).

📖 See also: Loss Functions for detailed coverage of MSE and other loss functions.

| Term | Symbol | Description |

|---|---|---|

| Input features | \(X\) | Independent variables |

| Output | \(y\) | Dependent variable (target) |

| Weights | \(W\) | Parameters learned during training |

| Prediction | \(\hat{y} = Xw\) | Model output |

| Regularization | \(\alpha\) | Controls model complexity |

L1 vs L2 Regularization (Lasso vs Ridge)¶

One-liner: Both add a penalty term to prevent overfitting; L1 (Lasso) uses absolute values and can zero out weights; L2 (Ridge) uses squared values and shrinks weights toward zero.

| Aspect | L1 (Lasso) | L2 (Ridge) |

|---|---|---|

| Penalty term | \(\alpha \sum \|w_i\|\) | \(\alpha \sum w_i^2\) |

| Effect on weights | Shrinks some to exactly zero | Shrinks all toward zero (never exactly) |

| Feature selection | ✅ Yes (sparse models) | ❌ No (keeps all features) |

| Geometric shape | Diamond (corners on axes) | Circle (smooth, no corners) |

| Use when | Many irrelevant features expected | All features contribute somewhat |

| Interpretability | Better (fewer features) | Lower (all features remain) |

Why L1 Produces Zeros but L2 Doesn't (Geometric Intuition)¶

The key insight is the shape of the constraint region:

| L1 (Diamond) | L2 (Circle) |

|---|---|

| Has corners on the axes | Smooth everywhere |

| Loss contours often hit a corner | Loss contours hit at a tangent point |

| Corners are where some \(w_i = 0\) | Tangent point rarely has \(w_i = 0\) exactly |

Visual: Imagine elliptical contour lines (your loss function) expanding from the OLS solution. The first place they touch the constraint region is your regularized solution: - L1's diamond → likely hits a corner (sparse solution) - L2's circle → hits a smooth edge (dense solution)

[!TIP] Memory trick: L1 = 1-norm = sum of |weights| = Lasso = Less features (zeros out weights)

Interview Answer Template¶

"What's the difference between L1 and L2 regularization?"

"Both L1 (Lasso) and L2 (Ridge) add a penalty term to the loss function to prevent overfitting by keeping weights small.

L2 adds the sum of squared weights — this shrinks all weights toward zero but never exactly to zero.

L1 adds the sum of absolute weights — this can push some weights to exactly zero, effectively doing feature selection.

I'd use L2 when I believe all features contribute and just want to prevent large weights. I'd use L1 when I suspect many features are irrelevant and want automatic feature selection. You can also combine them with Elastic Net."

Elastic Net (L1 + L2 Combined)¶

\(J(w) = |Xw - y|^2 + \alpha \left( \rho |w| + (1-\rho) |w|^2 \right)\)

| \(\rho\) value | Result |

|---|---|

| \(\rho = 1\) | Pure L1 (Lasso) |

| \(\rho = 0\) | Pure L2 (Ridge) |

| \(0 < \rho < 1\) | Mix of both |

Use Elastic Net when: - Features are correlated (Lasso picks one arbitrarily; Elastic Net keeps related features together) - You want sparsity (L1) with stability (L2) - Unsure which is better — let cross-validation find optimal \(\rho\)

[!NOTE] In

sklearn.linear_model.ElasticNet, the mixing parameter is calledl1_ratio(same as \(\rho\) above).

3 Different Linear Models¶

Cost Functions¶

-

Linear Regression (a.k.a. ordinary least squares (OLS))

- \(J(w)\) = \(min_{w}|Xw-y|^{2}\)

-

Ridge Regression (L2 regularization)

- \(J(w)\) = $min_{w}|Xw-y|^{2} + \alpha |w|^{2} $

-

Lasso (L1 regularization)

- \(J(w)\) = $min_{w}|Xw-y|^{2} + \alpha |w| $

Advantages and Limitations¶

- Linear Regression

- Linear regression has no parameters to control/tune which makes it easy to use, but it also has no way to control model complexity.

- For multi dimensional dataset, linear models can be very powerful.

- Ridge Regression

- Addresses some of the problems of standard Linear Regression (Ordinary Least Squares) by imposing a penalty on the size of the coefficients, thereby keeping the magnitude of coefficients \(w\) as small as possible, while still predicting well.

- Penalises the euclidean distance of \(w\) (i.e. \(\alpha |w|^{2}\)).

- The Ridge model makes a trade-off between the model simplicity (near-zero \(w\) coefficients) and its performance on training set, by tuning regularization parameter \(\alpha\).

- If \(\alpha\) is too small (close to 0), we end up with Linear Regression model.

- \(J(w) = min_{w}|Xw-y|^{2} + \alpha |w|^{2} = min_{w}|Xw-y|^{2} + 0*|w|^{2} = min_{w}|Xw-y|^{2}\).

- With enough training data, regularization becomes less important as Linear regression catches up with ridge as with more data, it gets harder for model to overfit.

- If we have fewer features, ridge regression is the first choice.

- Lasso Regression

- An alternative to ridge to restrict the magnitude of coefficients, but in a slightly different way.

- Penalises sum of absolute values of \(w\) (i.e. \(\alpha |w|\)).

- Consequence of using L1 regularization: Some coefficients are exactly zero => This can be seens as a automatic feature selection => Makes the model easier to interpret as it selects only subset of features.

- If we have large number of features and we expect only few of them to be important, Lasso is better choice.

Interpreting Coefficients¶

- For example, \(\hat{y} = w_{1}x_{1}+w_{0} = 0.5x_{1} + 0.25\), we can interpret that 1 unit increase in \(x_{1}\) gives us \(w_{1}=0.5\) increase in \(\hat{y}\).

📖 See also: Why Does the OLS Slope Use Covariance? — explains why \(\beta = \frac{Cov(X,Y)}{Var(X)}\) and connects to correlation.

Breaking down "Ordinary Least Squares" (OLS)¶

- Squares → We square each residual: \((y_i - \hat{y}_i)^2\)

- Least → We find the line that minimizes the sum of these squared residuals

- Ordinary → Distinguishes it from other variants (like Weighted Least Squares or Generalized Least Squares)

The objective function

OLS finds coefficients \(\beta\) that minimize:

Why square the errors? 1. Removes sign — Without squaring, positive and negative errors would cancel out (a point +5 above the line and one -5 below would sum to 0, looking "perfect") 2. Penalizes large errors more — An error of 10 contributes 100, while an error of 2 contributes only 4. This pushes the line toward reducing big misses. 3. Mathematically convenient — Squared functions are differentiable everywhere, making it easy to find the minimum using calculus (set derivative to zero, solve for \(\beta\)) 4. Connects to maximum likelihood — If errors are normally distributed, minimizing squared errors is equivalent to maximizing the likelihood of observing your data

[!TODO] Revisit this point 4 later

Assumptions of Linear Regression¶

- Input and output has linear relationship

- Linear regression fits a straight line (or hyperplane) to your data. If the true relationship is curved or non-linear, your model will systematically miss patterns and make poor predictions. The model literally cannot capture non-linear relationships—it's constrained by its functional form.

- Variables are normally distributed

- More precisely, the residuals (errors, \(e_i = y_i - \hat{y}_i\)) should be normally distributed. This matters because:

- Statistical inference (confidence intervals, p-values, hypothesis tests) relies on normality assumptions

- If residuals aren't normal, your coefficient estimates are still valid, but your significance tests and confidence intervals become unreliable

- More precisely, the residuals (errors, \(e_i = y_i - \hat{y}_i\)) should be normally distributed. This matters because:

- Little to no collinearity between our features

- When features are highly correlated with each other:

- The model can't distinguish which feature is actually responsible for the effect

- Coefficient estimates become unstable (small data changes → large coefficient swings)

- Standard errors inflate, making it hard to determine statistical significance

- The model still predicts fine, but interpretation of individual coefficients becomes meaningless

- When features are highly correlated with each other:

- No autocorrelation or time dependance in our dependant variable

- Autocorrelation means errors at one time point are correlated with errors at another (common in time series). When present:

- Standard errors are underestimated

- You get falsely confident p-values and confidence intervals

- The model appears more precise than it actually is

- OLS is no longer the best linear unbiased estimator (BLUE)

- Autocorrelation means errors at one time point are correlated with errors at another (common in time series). When present:

- Homoscedasticity \(\Rightarrow\) Error (term) in our model are roughly equal

- The error variance should be constant across all levels of the independent variables. When violated (heteroscedasticity):

- Coefficient estimates remain unbiased, but they're no longer efficient (not minimum variance)

- Standard errors are wrong, leading to invalid hypothesis tests

- Predictions in high-variance regions are less reliable than the model suggests

- The error variance should be constant across all levels of the independent variables. When violated (heteroscedasticity):

[!TIP] Key insight:

Violations of assumptions 1 and 3 affect your estimates themselves. Violations of 2, 4, and 5 primarily affect your inference (standard errors, p-values, confidence intervals)—your predictions may still be reasonable, but your uncertainty quantification will be wrong.

Why it's a problem: - OLS gives equal weight to all observations - But observations in high-variance regions are less reliable - Standard errors become wrong → invalid confidence intervals and hypothesis tests

Two Ways to Compute Linear Regression Weights¶

There are two fundamental approaches to finding the optimal weights (coefficients) for linear regression:

| Approach | Method | Formula |

|---|---|---|

| Closed-form (Analytical) | Solve directly using linear algebra | \(\beta = (X^T X)^{-1} X^T y\) |

| Gradient Descent (Iterative) | Repeatedly update weights to minimize loss | \(w := w - \alpha \nabla J(w)\) |

Closed-Form Solution¶

The closed-form solution solves the normal equations directly. For different numbers of features:

| # Features | Closed-Form Solution | Derivation |

|---|---|---|

| 1 feature (simple LR) | \(\beta = \frac{Cov(X,Y)}{Var(X)}\) | Special case of the matrix formula |

| Many features (multiple LR) | \(\beta = (X^T X)^{-1} X^T y\) | General solution from normal equations |

📖 Connection: The single-feature formula \(\beta = \frac{Cov(X,Y)}{Var(X)}\) is not a separate "approach" — it's just what the matrix formula \((X^T X)^{-1} X^T y\) simplifies to when you have only one feature. See Why Does the OLS Slope Use Covariance? for the intuition.

When to Use Each Approach¶

| Approach | Use When | Why |

|---|---|---|

| Closed-form | Small-medium datasets (< 10K features) | Direct solution, no iterations needed |

| Closed-form | \((X^T X)\) is invertible | Required for matrix inversion |

| Gradient Descent | Large datasets (millions of rows) | Matrix inversion is \(O(n^3)\), expensive |

| Gradient Descent | \((X^T X)\) is singular or near-singular | Can't invert, but GD still works |

| Gradient Descent | Online/streaming data | Can update incrementally |

Key insight: Both approaches minimize the same objective function (sum of squared errors). They just differ in how they find the minimum: - Closed-form: Jumps directly to the answer (one-shot calculation) - Gradient Descent: Walks downhill step-by-step until reaching the minimum

Gradient Descent¶

One-liner: Iterative optimization algorithm that updates parameters in the direction of steepest descent (negative gradient) to minimize a cost function.

| Concept | What it does |

|---|---|

| Gradient Descent | The optimization algorithm - decides how to update weights |

| Backpropagation | The method to compute gradients in neural networks (chain rule) |

| Type | Data per Update | Use Case |

|---|---|---|

| Batch GD | All \(m\) samples | Small datasets, stable convergence |

| Stochastic GD | 1 sample | Online learning, very large datasets |

| Mini-batch GD | \(b\) samples (\(b \approx 32-128\)) | Most common - balances speed & stability |

Why Gradient Descent Might Fail to Converge:

| Reason | What Happens | Fix |

|---|---|---|

| Learning rate too high | Oscillates/diverges, jumps over minimum | Reduce α, use learning rate schedulers |

| Learning rate too small | Extremely slow convergence | Increase α, use adaptive methods (Adam) |

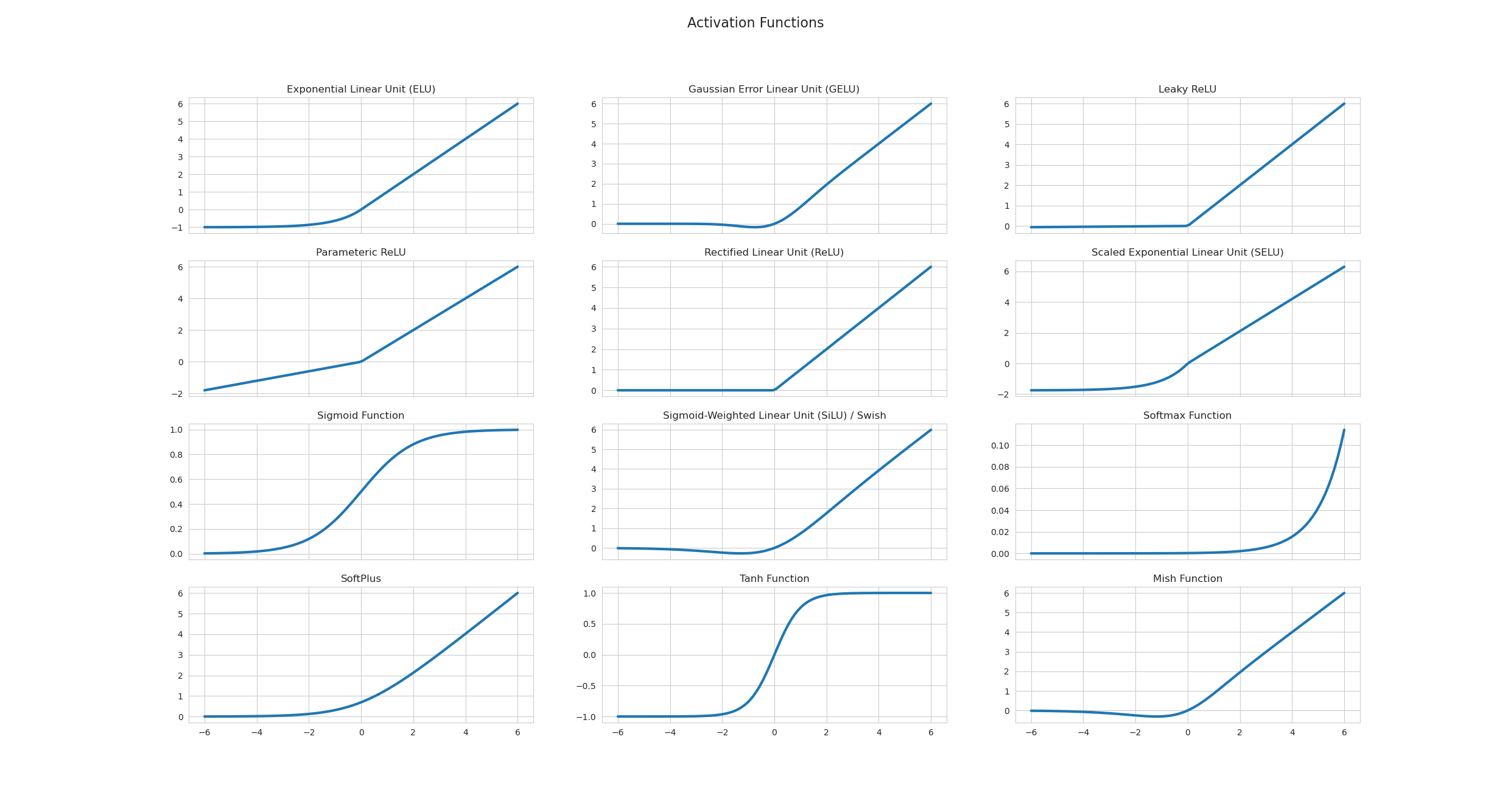

| Vanishing gradients | Gradients → 0 in deep networks (e.g., sigmoid extremes) → weights stop updating | Use ReLU, batch normalization, skip connections (see Activation Functions) |

| Exploding gradients | Gradients → huge values → weights become NaN | Gradient clipping, proper initialization |

| Local minima | Gets stuck in suboptimal minimum | Momentum, random restarts, SGD noise |

| Saddle points | Gradient = 0 but not a minimum (flat in some directions) | Momentum, Adam (adapts per-parameter) |

| Plateaus | Flat regions where gradient ≈ 0 | Learning rate warmup, momentum |

| Poor initialization | Start in bad region (e.g., all zeros → symmetric gradients) | Xavier/He initialization |

| Unscaled features | Elongated contours → zigzag path | Feature normalization/standardization |

| Noisy data | Oscillates around minimum | Larger batch size, gradient averaging |

[!WARNING] Backpropagation ≠ Gradient Descent (common mix-up!) - Backpropagation = method to compute gradients (chain rule in neural networks) - Gradient Descent = optimization algorithm that uses those gradients to update weights - For linear regression, you don't need backprop - you compute gradients directly. Backprop is specifically for neural networks with hidden layers.

Interview Answer Template:

"Gradient descent can fail to converge for several reasons: (1) Learning rate issues—too high causes oscillation, too small is very slow; (2) Gradient problems—vanishing gradients in deep networks with sigmoid, or exploding gradients causing NaN; (3) Loss surface issues—local minima, saddle points, or plateaus where gradient ≈ 0; (4) Data issues—unscaled features cause inefficient zigzag updates; (5) Poor weight initialization. We address these with techniques like Adam optimizer, batch normalization, proper initialization (Xavier/He), and gradient clipping."

Detailed Explanation¶

The Algorithm¶

Terms - Parameters/Weights: \(w_0, w_1\) - Learning Rate: \(\alpha\)

Concept - We have cost function \(J(w_0, w_1)\) - Goal: \(\min_{w_0, w_1} J(w_0, w_1)\) - Approach: - Start with random \(w_0, w_1\) - Iteratively update to reduce \(J\) until reaching a minimum

Update Rule (repeat until convergence): $\(w_j = w_j - \alpha \dfrac{\partial}{\partial w_j} J(w_0, w_1) \text{ for } j = 0, 1\)$

Gradient Descent for Linear Regression¶

- Model: \(h(x) = w_0 + w_1 x\)

- Cost function (MSE): \(J(w_0, w_1) = \dfrac{1}{2m} \sum_{i=1}^{m} (h(x^{(i)}) - y^{(i)})^2\)

Deriving the Gradients (Chain Rule)

For \(w_1\): $\(\frac{\partial J}{\partial w_1} = \frac{1}{m} \sum_{i=1}^{m} \underbrace{(h(x^{(i)}) - y^{(i)})}_{\text{outer}} \cdot \underbrace{x^{(i)}}_{\frac{\partial h}{\partial w_1}}\)$

For \(w_0\) (since \(\frac{\partial h}{\partial w_0} = 1\)): $\(\frac{\partial J}{\partial w_0} = \frac{1}{m} \sum_{i=1}^{m} (h(x^{(i)}) - y^{(i)})\)$

Update Rules - \(w_0 = w_0 - \alpha \dfrac{1}{m} \large\sum_{i=1}^{m} (h(x^{(i)}) - y^{(i)})\) - \(w_1 = w_1 - \alpha \dfrac{1}{m} \large\sum_{i=1}^{m} (h(x^{(i)}) - y^{(i)}) \cdot x^{(i)}\)

Types of Gradient Descent¶

Batch Gradient Descent - Use all \(m\) training samples in each step

Stochastic Gradient Descent (SGD) - Only one training example per iteration

Mini-batch Gradient Descent (most common) - Use \(b\) samples per iteration (\(b << m\), typically \(b = 32-128\)) - Parallelized computation: 1. Split the mini-batch across multiple GPUs/workers 2. Each worker computes gradients for its portion in parallel 3. Aggregate (sum/average) the gradients from all workers 4. Update the model parameters using the combined gradient - Why mini-batch is favored: 1. Memory constraints - full dataset doesn't fit in GPU memory 2. Faster convergence - more frequent parameter updates 3. Noise helps generalization - escapes sharp local minima

Bias-Variance Tradeoff¶

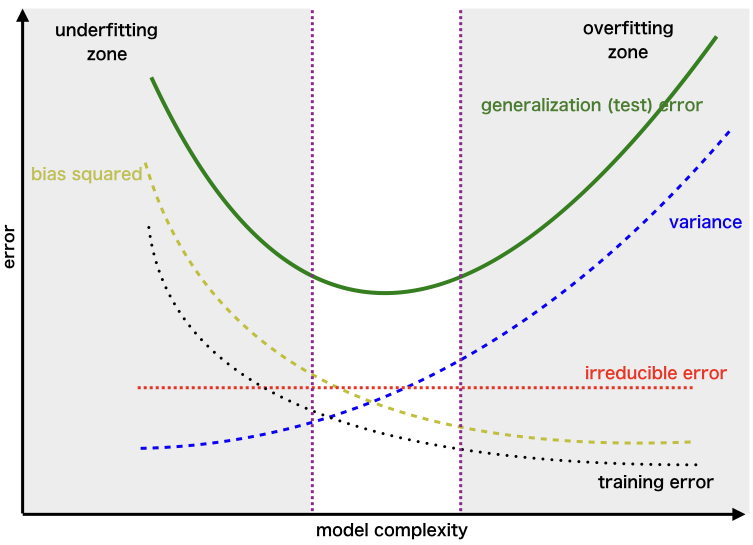

One-liner: Prediction error comes from three sources—bias (underfitting), variance (overfitting), and irreducible noise—and reducing one typically increases the other.

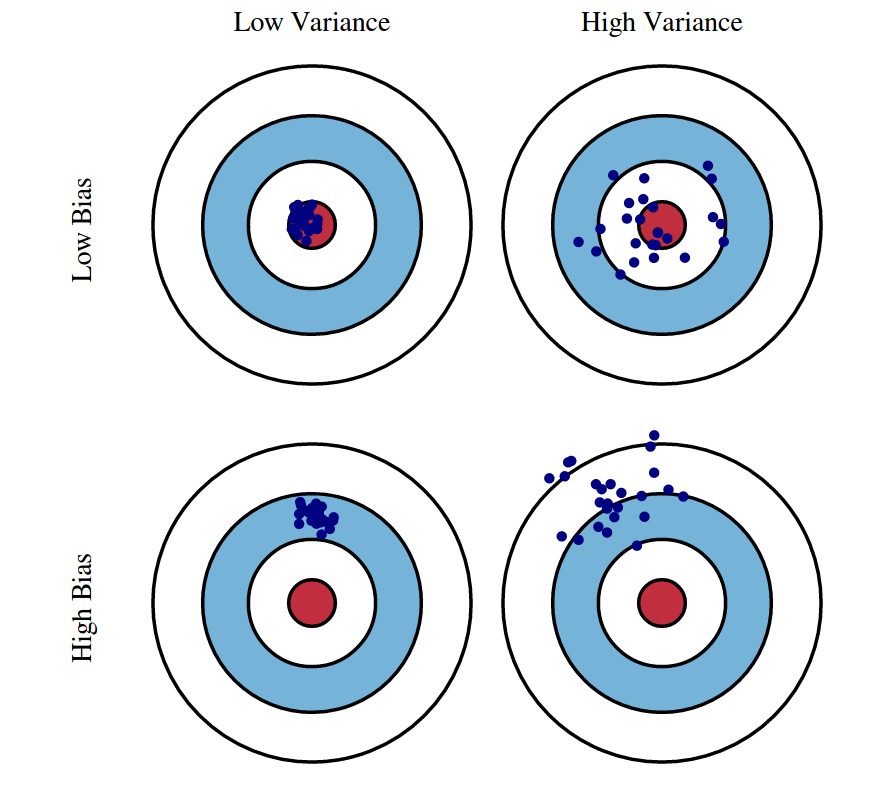

| Term | What it is | Caused by | Think of it as |

|---|---|---|---|

| Bias | Systematic error from wrong assumptions | Model too simple | "Too stubborn to learn" |

| Variance | Sensitivity to training data fluctuations | Model too complex | "Too flexible, learns noise" |

| Irreducible | Noise inherent in data | Nature of the problem | "Can't be reduced" |

| Model Complexity | Bias | Variance | Risk |

|---|---|---|---|

| Too Simple | High | Low | Underfitting |

| Too Complex | Low | High | Overfitting |

| Just Right | Balanced | Balanced | Optimal |

| To Reduce | Do This |

|---|---|

| Bias (underfitting) | More complex model, more features, less regularization, boosting |

| Variance (overfitting) | More data, simpler model, more regularization, bagging, dropout |

[!TIP] Key insight: You cannot minimize both simultaneously—this is the fundamental tradeoff. Regularization (L1/L2) explicitly controls this balance.

Interview Answer Template:

"The bias-variance tradeoff describes two sources of prediction error that work against each other. Bias is error from oversimplifying—like fitting a line to curved data. High bias means the model consistently misses the pattern (underfitting). Variance is error from being too sensitive to training data—like a high-degree polynomial that fits noise. High variance means the model won't generalize (overfitting). The tradeoff: simpler models have high bias but low variance; complex models have low bias but high variance. We aim for the complexity level that minimizes total error, often using cross-validation to find this balance and regularization to control it."

Detailed Explanation¶

The Three Error Components¶

1. Bias (Underfitting) - Error from wrong assumptions in the model (model too simple) - The model can't capture the true underlying pattern - Example: Fitting a straight line to curved data - High bias → consistently wrong predictions (systematic error)

2. Variance (Overfitting) - Error from sensitivity to fluctuations in training data (model too complex) - The model memorizes noise instead of learning the signal - Example: A high-degree polynomial that fits every training point perfectly - High variance → predictions change wildly with different training sets

3. Irreducible Error - Noise inherent in the data itself - Cannot be reduced by any model - Represents the limit of how good any model can be

Visual Intuition: Dart Board Analogy¶

High Bias, Low Variance: High Variance, Low Bias: Ideal (Low Both):

╭───────╮ ╭───────╮ ╭───────╮

│ ◎ │ ← target │ ◎ │ │ ◎ │

│ •••• │ ← darts │• • •│ │ ••• │

│ •••• │ (clustered │ • • │ │ ••• │

╰───────╯ but off-center) ╰───────╯ ╰───────╯

(scattered but (clustered

centered on avg) at center)

Connection to Regularization¶

- L1/L2 regularization explicitly manages this tradeoff by adding a penalty for model complexity

- Higher regularization → more bias, less variance

- Lower regularization → less bias, more variance

Mathematical Derivation: Why Bias²?¶

Understanding the Key Quantities

| Symbol | What it is | Type |

|---|---|---|

| ŷ | A single prediction from your model | Random variable (depends on training data) |

| E[ŷ] | Average prediction across all possible training sets | Theoretical constant* |

| E[(ŷ-y)²] | Mean Squared Error (MSE) | Theoretical constant* |

Key insight: ŷ is random (changes each time you retrain); E[ŷ] and MSE are theoretical constants determined by your learning algorithm + data distribution. They describe "what would happen on average if you trained infinite models on infinite samples from the same population." Like how a fair coin's E[flip] = 0.5 is fixed—it's a property of the coin, not any single flip.

Concrete Example (house price, true value y = $500K):

| Training Set | ŷ (prediction) | (ŷ - y)² |

|---|---|---|

| Sample 1 | $480K | 400 |

| Sample 2 | $520K | 400 |

| Sample 3 | $510K | 100 |

| Sample 4 | $490K | 100 |

| Sample 5 | $500K | 0 |

→ E[ŷ] = $500K, E[(ŷ-y)²] = 200

The MSE Decomposition

Start with: \(E[(\hat{y} - y)^2]\)

Trick: Add and subtract \(E[\hat{y}]\):

Expand using \((a + b)^2 = a^2 + 2ab + b^2\) where \(a = (\hat{y} - E[\hat{y}])\) and \(b = (E[\hat{y}] - y)\):

The three terms:

- First term → \(E[(\hat{y} - E[\hat{y}])^2]\) = Variance (by definition)

- Third term → \((E[\hat{y}] - y)^2\) = Bias² (constants, E[] does nothing)

- Middle term → vanishes because \(E[\hat{y} - E[\hat{y}]] = 0\)

Result: $\(\text{MSE} = \text{Bias}^2 + \text{Variance} + \sigma^2_\epsilon\)$

| Term | Measures | Plain English |

|---|---|---|

| Bias² = \((E[\hat{y}] - y)^2\) | Distance from average prediction to truth | "On average, how far off am I?" |

| Variance = \(E[(\hat{y} - E[\hat{y}])^2]\) | Spread of predictions around their average | "How inconsistent are my predictions?" |

| \(\sigma^2_\epsilon\) | Irreducible noise in data: \(y = f(x) + \epsilon\) | "Noise I can't eliminate" |

Interview Answer (if asked "why squared?"):

"The squared term comes from the MSE decomposition. Bias measures systematic error—how far the expected prediction is from the true value. Since we use squared error as our loss function, bias gets squared. This also means bias and variance are on the same scale and can be directly compared."

Overfitting¶

One-liner: Overfitting occurs when a model learns the training data too well (including noise), resulting in high training performance but poor generalization to unseen data.

| Aspect | Overfitting | Underfitting |

|---|---|---|

| Training performance | High ✓ | Low ✗ |

| Test/validation performance | Low ✗ | Low ✗ |

| Bias-variance | Low bias, high variance | High bias, low variance |

| Model complexity | Too complex | Too simple |

| What it learns | Signal + noise | Neither well |

Detection¶

| Method | What to look for |

|---|---|

| Train vs. validation gap | Training accuracy >> validation accuracy |

| Learning curves | Validation loss starts increasing while training loss keeps decreasing |

| Cross-validation | High variance in scores across folds |

Handling Techniques¶

| Technique | How it helps | Category |

|---|---|---|

| More training data | Harder to memorize; forces learning general patterns | Data |

| Data augmentation | Artificially expands training set (rotations, crops, noise) | Data |

| Cross-validation | Better generalization estimate; detects overfitting earlier | Validation |

| Early stopping | Stop training when validation loss stops improving | Training |

| Regularization (L1/L2) | Penalizes large weights; constrains model capacity | Model |

| Dropout (neural nets) | Randomly zeros neurons; prevents co-adaptation | Model |

| Reduce model complexity | Fewer layers/neurons, simpler model family | Model |

| Batch normalization | Adds regularization effect; stabilizes training | Model |

| Pruning (trees/NNs) | Remove branches/weights that don't improve validation | Model |

| Ensemble methods | Combine models to reduce variance (bagging) | Model |

| Feature selection | Remove noisy/irrelevant features | Features |

[!TIP] Bias-variance connection: Overfitting = high variance, low bias. All these techniques trade off some bias (slightly worse training fit) for lower variance (better generalization).

Interview Answer Template¶

"How do you detect and handle overfitting?"

"I detect overfitting when training performance is significantly better than validation/test performance — the model has memorized the training data instead of learning general patterns.

To address it, I consider three categories of fixes: 1. Data: get more data or use data augmentation 2. Model: simplify the model — fewer parameters, regularization (L1/L2), dropout 3. Training: use early stopping with cross-validation to find the right stopping point

The specific approach depends on whether I'm data-limited or model-complexity-limited."

Cross-Validation¶

One-liner: Cross-validation is a technique to evaluate model generalization by systematically rotating which portion of data is used for training vs. testing, providing a more reliable performance estimate than a single train/test split.

Why Cross-Validation?¶

| Problem with single split | How CV helps |

|---|---|

| Overoptimizing on test set | Uses held-out test set only at the end |

| Lucky/unlucky split | Averages over multiple splits |

| Wasted data (small datasets) | Every sample used for both train and test |

| High variance in estimate | Multiple folds reduce variance |

Types of Cross-Validation¶

| Type | Description | Use case |

|---|---|---|

| K-Fold | Split into K parts, rotate through | Most common (K=5 or 10) |

| Stratified K-Fold | K-fold preserving class distribution | Imbalanced classification |

| Leave-One-Out (LOO) | K = N (each sample is a fold) | Very small datasets |

| Leave-One-Group-Out (LOGO) | Leave one subject/site out | Medical, multi-site studies |

| Time Series Split | Train on past, test on future | Temporal data (no shuffling!) |

Train/Validation/Test Split Ratios¶

| Dataset Size | Train | Validation | Test | Rationale |

|---|---|---|---|---|

| Small (<10K) | 60% | 20% | 20% | Need enough in each split |

| Medium (10K-100K) | 70-80% | 10-15% | 10-15% | Standard approach |

| Large (100K+) | 90-98% | 1-5% | 1-5% | Even 1% = thousands of samples |

| Massive (millions) | 98-99% | 0.5-1% | 0.5-1% | Don't waste training data |

[!TIP] Key insight: Validation/test sets need to be large enough for statistical significance (~5K-10K samples), not a fixed percentage. With massive data, even 1% is plenty.

[!WARNING] Time series trap: Never shuffle time series data in CV — future data leaking into training is a cardinal sin. Use chronological splits only.

Why LOGO Matters for Medical/Multi-Site ML¶

If you train on Patient A's scans and test on Patient A's other scans, the model might just learn "what Patient A looks like" rather than the disease pattern. LOGO ensures the model has never seen that patient/institution during training — a harder but more realistic generalization test.

Interview Answer Template¶

"What is cross-validation and why use it?"

"Cross-validation helps us get a reliable estimate of how well our model generalizes to unseen data.

The problem with a single train/test split is we might overfit to that specific test set, or get a lucky/unlucky split. With K-fold CV, we rotate which portion is held out, train K models, and average the results — giving us a more stable performance estimate.

For hyperparameter tuning, I use a three-way split: train on training set, tune hyperparameters using validation performance, and only touch the test set at the very end for final evaluation.

The split ratios depend on dataset size — with millions of samples, even 1% for validation gives 10K+ samples, which is statistically sufficient."

Bayesian Optimization¶

One-liner: A sample-efficient method for hyperparameter tuning that learns from previous attempts to intelligently decide where to search next, rather than randomly guessing or exhaustively trying everything.

| Approach | Strategy | Trials Needed | Best For |

|---|---|---|---|

| Grid Search | Try all combinations | Exponential | Few params, small ranges |

| Random Search | Sample randomly | 50-100+ | Better than grid, simple |

| Bayesian Optimization | Learn & adapt | 20-50 | Expensive trials (hours/days) |

Core Components:

| Component | What It Does | Common Choice |

|---|---|---|

| Surrogate model | Predicts score + uncertainty for untried points | Gaussian Process (GP) |

| Acquisition function | Decides where to sample next | Expected Improvement (EI) |

| Search space | Defines hyperparameter ranges | Continuous, categorical, mixed |

How It Works:

┌─────────────────────────────────────────────────────────────┐

│ 1. SURROGATE MODEL │

│ Build a probabilistic model of: hyperparams → score │

│ (Gaussian Process gives prediction AND uncertainty) │

│ │

│ 2. ACQUISITION FUNCTION │

│ "Where should I sample next?" │

│ Balances: exploitation (near known good points) │

│ vs exploration (uncertain regions) │

│ │

│ 3. UPDATE & REPEAT │

│ Train with new point, update surrogate, repeat │

└─────────────────────────────────────────────────────────────┘

Acquisition Functions:

| Function | Strategy | Intuition |

|---|---|---|

| Expected Improvement (EI) | Expected gain over current best | "How much better might this be?" |

| Upper Confidence Bound (UCB) | Prediction + uncertainty bonus | Optimistic exploration |

| Probability of Improvement (PI) | Chance of beating best | Conservative, exploitation-heavy |

When to Use:

| Use Bayesian Optimization | Don't Bother |

|---|---|

| Each trial is expensive (hours/days) | Each trial is fast (seconds) |

| Limited budget (10-100 trials) | Can afford 1000s of trials |

| Continuous/smooth search space | Highly discrete or conditional |

| Moderate dimensions (< 20 params) | Very high dimensional (100s) |

[!TIP] Why "Bayesian"? The Gaussian Process maintains a probability distribution over possible functions, not just a single guess. Each new data point updates the belief (posterior) about where good values might be — this is Bayesian inference. The uncertainty estimate is the key advantage.

Tools:

| Tool | Notes |

|---|---|

| Optuna | Most popular, easy API, supports pruning |

| Hyperopt | Older, TPE algorithm (tree-based) |

| W&B Sweeps | Integrated with experiment tracking |

| Ray Tune | Distributed, supports many algorithms |

Optuna Example¶

import optuna

def objective(trial):

# Define hyperparameter search space

lr = trial.suggest_float("lr", 1e-5, 1e-1, log=True)

n_layers = trial.suggest_int("n_layers", 1, 5)

dropout = trial.suggest_float("dropout", 0.1, 0.5)

# Train model with these hyperparams

model = train_model(lr=lr, n_layers=n_layers, dropout=dropout)

# Return metric to optimize

return model.validation_accuracy

# Run optimization

study = optuna.create_study(direction="maximize")

study.optimize(objective, n_trials=50)

print(f"Best params: {study.best_params}")

print(f"Best accuracy: {study.best_value}")

Interview Answer Template:

"Bayesian optimization is a sample-efficient method for hyperparameter tuning. It builds a surrogate model — usually a Gaussian Process — that predicts both performance AND uncertainty for untried configurations. An acquisition function like Expected Improvement then balances exploitation (sampling near known good points) with exploration (sampling uncertain regions). This is much more efficient than grid or random search when each trial is expensive — typically finding good hyperparameters in 20-50 trials instead of hundreds. I usually use Optuna in practice because it has a clean API and supports early stopping of bad trials."

Feature Engineering¶

One-liner: Feature engineering transforms raw input data into meaningful representations that help models learn more effectively — it's how you inject domain knowledge into ML.

Why Feature Engineering Matters¶

| Reason | Example |

|---|---|

| Better model performance | price_per_sqft is more predictive than raw price and area separately |

| Inject domain knowledge | "Recency matters" → create days_since_last_purchase |

| Handle data types | Convert text/images/categories to numerical features |

| Capture non-linearity | Polynomial features for linear models |

| Reduce noise | Aggregate noisy signals into stable features |

Feature Engineering Techniques¶

| Category | Techniques | Example |

|---|---|---|

| Numerical transformations | Log, sqrt, Box-Cox, binning | log(income) for skewed data |

| Interactions | Multiply/divide features | speed = distance / duration |

| Polynomial | Square, cube terms | age² for non-linear age effects |

| Cyclical encoding | Sine/cosine transformation | sin(2π × hour/24), cos(2π × hour/24) |

| Date/time extraction | Extract components | day_of_week, is_weekend, is_holiday |

| Recency features | Time since event | days_since_last_login, hours_since_last_order |

| Categorical encoding | One-hot, target, frequency encoding | Convert "red/blue/green" to numbers |

| Text features | TF-IDF, embeddings, n-grams | Document vectors from raw text |

| Aggregations | Group-by statistics | avg_trips_per_day, total_spend_last_7_days |

| Window features | Rolling statistics | last_7_days_avg, last_50_trips_count |

| Domain-specific | Business logic | RFM (Recency, Frequency, Monetary) |

Feature Engineering by Model Type¶

| Technique | Linear Models | Tree-Based Models | Neural Networks |

|---|---|---|---|

| Feature scaling | ✅ Required | ❌ Not needed (scale-invariant) | ✅ Required (helps gradient descent converge) |

| Polynomial features | ✅ Helpful (can't learn non-linearity) | ❌ Unnecessary (trees split naturally) | ❌ Unnecessary (learns non-linearity via activations) |

| Interaction features (A×B) | ✅ Must create manually | ❌ Trees capture automatically | ❌ Learns automatically (hidden layers) |

| Binning continuous vars | Sometimes useful | ❌ Unnecessary (trees bin at every split) | ❌ Unnecessary (but embeddings for high-cardinality categoricals help) |

| One-hot encoding | ✅ Standard approach | ⚠️ Ordinal/target encoding often better | ⚠️ Embeddings preferred for high cardinality |

| Manual feature engineering | ✅ Critical for performance | ✅ Helpful but less critical | ⚠️ Less critical (can learn representations) |

💡 Key insights: - Linear models need you to engineer non-linearity (polynomials, interactions, binning) - Tree-based models discover non-linearity through splits — they bin at every node - Neural networks learn representations automatically via hidden layers, but still benefit from scaling and domain features

⚠️ Important caveat for neural networks: Even though NNs can learn representations automatically, you still need domain knowledge to decide what data to feed in. The network can only learn from features you provide — it won't magically know that "days since last purchase" matters if you only give it raw timestamps. Feature selection and knowing what signals exist still requires human insight.

Cyclical Encoding (Why Sine/Cosine?)¶

For cyclical features like hour (0-23) or day of week (0-6), raw numbers create false distances: - Hour 23 and Hour 0 are numerically far (23 vs 0) but temporally adjacent (1 hour apart)

Solution: Map to a circle using sine and cosine:

Now Hour 23 and Hour 0 are close in the transformed space!

Interview Answer Template¶

"What is feature engineering and why is it important?"

"Feature engineering is transforming raw input data into meaningful representations that help models learn more effectively. It's one of the most important parts of the ML pipeline.

It matters for several reasons: it can dramatically improve model performance, it lets us inject domain knowledge the model might not discover on its own, and it handles data types like text or categories that models can't use directly.

Common techniques include: numerical transformations like log for skewed data, interaction features like price_per_sqft, cyclical encoding with sine/cosine for time features, aggregations like average_spend_last_7_days, and text processing like TF-IDF or embeddings.

One important consideration: linear models need more feature engineering — polynomials, interactions, scaling — because they can't capture non-linearity on their own. Tree-based models need less because they naturally bin data and capture interactions through splits."

Class Imbalance¶

One-liner: Class imbalance occurs when one class significantly outnumbers another (e.g., 99% normal, 1% fraud), causing models to be biased toward the majority class.

Why Class Imbalance Is a Problem¶

| Issue | Example |

|---|---|

| Accuracy is misleading | 99% accuracy by always predicting "not fraud" |

| Model ignores minority class | Gradient updates dominated by majority class |

| Poor real-world performance | Fails at the task that actually matters (detecting fraud) |

Handling Techniques¶

| Level | Technique | How It Works |

|---|---|---|

| Data-level | Undersampling | Remove majority class samples |

| Oversampling | Duplicate minority class samples | |

| SMOTE | Create synthetic minority samples | |

| Algorithm-level | Class weights | Penalize minority class errors more in loss function |

| Threshold tuning | Lower decision threshold (e.g., 0.3 instead of 0.5) | |

| Ensemble | Balanced Random Forest | Undersample differently per tree |

| EasyEnsemble | Train multiple models on balanced subsets | |

| Other | Anomaly detection | Treat minority as anomalies (Isolation Forest, Autoencoders) |

| Collect more data | Sometimes the best solution! |

Undersampling vs Oversampling¶

| Aspect | Undersampling | Oversampling (SMOTE) |

|---|---|---|

| Data size | Decreases (loses information) | Increases |

| Training time | Faster | Slower |

| Risk | Lose important majority patterns | Overfitting to synthetic samples |

| When to prefer | Very large datasets, noisy majority class | Small/medium datasets, need all info |

How SMOTE Works¶

For each minority sample:

1. Find k nearest neighbors (typically k=5) in feature space

2. Randomly pick one neighbor

3. Create synthetic point on the line between them

Original: A ●─────────────● B (both minority class)

↓

Synthetic: A ●─────◆───────● B (new sample on the line)

Formula: synthetic = A + random(0,1) × (B - A)

Threshold Tuning Direction¶

| Goal | Move Threshold | Effect |

|---|---|---|

| Catch more minority (fraud, disease) | Lower (e.g., 0.3) | More positive predictions → higher recall |

| Reduce false positives | Higher (e.g., 0.7) | Fewer positive predictions → higher precision |

⚠️ Common mistake: For imbalanced problems where catching minority matters, lower the threshold (not raise it).

Evaluation Metrics for Imbalanced Data¶

| Metric | Good for Imbalanced? | Why |

|---|---|---|

| Accuracy | ❌ Bad | 99% by predicting all majority |

| AUC-ROC | ⚠️ Can be misleading | Includes easy-to-get True Negatives |

| AUC-PR (AUPRC) | ✅ Better | Focuses on minority class performance |

| F1 / F-beta | ✅ Good | Balances precision and recall |

| Balanced Accuracy | ✅ Good | Average of recall per class |

| Precision @ k | ✅ Good | "Of top k predictions, how many correct?" |

💡 Key insight: AUPRC is preferred over AUC-ROC for severe imbalance because it doesn't get inflated by easy True Negatives.

Interview Answer Template¶

"How do you handle class imbalance?"

"I approach class imbalance at multiple levels:

At the data level, I can undersample the majority class or oversample the minority. SMOTE is a popular oversampling technique that creates synthetic samples by interpolating between existing minority samples and their neighbors.

At the algorithm level, I can adjust class weights to penalize minority class errors more heavily in the loss function. I can also tune the decision threshold — lowering it from 0.5 to say 0.3 to catch more minority cases at the cost of more false positives.

For evaluation, I avoid accuracy since it's misleading. Instead I use metrics like F1 score, balanced accuracy, or area under the precision-recall curve (AUPRC), which focus on minority class performance.

The choice between undersampling and oversampling depends on dataset size — undersampling is fine for huge datasets with noisy majority class, while oversampling preserves more information for smaller datasets."

Data Drift & Bias¶

One-liner: Data drift refers to changes in data distributions over time (causing model degradation), while data bias refers to systematic errors in data collection that make training data unrepresentative.

Temporal Drift (Distribution Shifts Over Time)¶

| Type | What Changes | Example | Detection |

|---|---|---|---|

| Label Drift | Meaning or distribution of labels | New categories emerge (EV charging issues); label definitions shift | Monitor prediction distribution, label frequency changes |

| Feature Drift | Input feature distributions | Chat slang evolves, new emojis, regional language patterns shift | Track feature statistics, PSI/KL divergence on inputs |

| Concept Drift | Relationship between features and labels (P(y|X)) | "Same day delivery" now means 2 hours, not 12 hours | Monitor model performance metrics, not just distributions |

[!WARNING] Interview trap: Feature drift ≠ Concept drift! - Feature drift: Input distribution changes but relationship X→y is the same - Concept drift: What counts as "positive" changes even if inputs look similar

Data Bias (Systematic Collection Errors)¶

| Type | What It Is | Example | Mitigation |

|---|---|---|---|

| Selection Bias | Training data not representative of production | Only training on escalated chats, missing "easy" resolved issues | Stratified sampling, collect from all user segments |

| Survivorship Bias | Only data that "survived" some filter | Training on completed orders—missing cancelled order patterns | Include negative/incomplete cases explicitly |

| Temporal Bias | Training data from unrepresentative time period | Training on holiday data, deploying in regular season | Use diverse time windows, recent data weighting |

| Labeling Bias | Inconsistent or biased human annotations | Different annotators have different thresholds for "urgent" | Clear guidelines, inter-annotator agreement checks |

Drift Detection & Monitoring¶

| Method | What It Measures | When to Use |

|---|---|---|

| PSI (Population Stability Index) | Distribution shift magnitude | Continuous monitoring of categorical/binned features |

| KL Divergence | Information-theoretic distance between distributions | Feature drift detection |

| Performance Metrics | Accuracy, F1, AUC trends | Concept drift (relationship changed) |

| Prediction Distribution | Shift in model output distribution | Quick proxy when ground truth delayed |

PSI Formula:

How to calculate: 1. Bin your feature (e.g., age: 0-20, 20-40, 40-60, 60+) 2. Calculate % in each bin for baseline (training) and current (production) 3. Apply formula for each bin, sum them up

Worked example:

| Age Bin | Training (E) | Production (A) | (A-E) | ln(A/E) | Contribution |

|---|---|---|---|---|---|

| 0-20 | 10% | 15% | 0.05 | 0.41 | 0.020 |

| 20-40 | 50% | 40% | -0.10 | -0.22 | 0.022 |

| 40-60 | 30% | 35% | 0.05 | 0.15 | 0.008 |

| 60+ | 10% | 10% | 0.00 | 0.00 | 0.000 |

| Total | PSI | 0.050 ✅ |

PSI Interpretation: | PSI Value | Interpretation | |-----------|----------------| | < 0.1 | ✅ No significant shift | | 0.1 - 0.25 | ⚠️ Moderate shift, investigate | | > 0.25 | 🚨 Significant shift, likely retraining needed |

Other drift metrics:

| Metric | Best For | Pros | Cons |

|---|---|---|---|

| PSI | Categorical / binned | Easy to interpret, symmetric, industry standard | Requires binning continuous features |

| KL Divergence | Continuous | Information-theoretic | Asymmetric, undefined if Q(x)=0 |

| KS Test | Continuous | Gives p-value, no binning | Less intuitive than PSI |

| Jensen-Shannon | Any | Symmetric, bounded [0,1] | Less common in industry |

[!WARNING] Key insight: PSI detects feature drift (input distribution), not concept drift (relationship change). A model can have PSI ≈ 0 but still degrade if business rules changed. Monitor both PSI and performance metrics.

Anomaly Detection vs Drift Detection — Know the difference:

| Aspect | Anomaly Detection (Datadog) | Drift Detection (PSI) |

|---|---|---|

| Monitors | Single metric over time (QPS, latency) | Distribution shape across population |

| Question | "Is this value unusual vs recent history?" | "Is current population different from training?" |

| Baseline | Rolling (last hour/day/week) | Fixed (training data) |

| Granularity | Real-time (per minute) | Batch (hourly/daily) |

ANOMALY DETECTION DRIFT DETECTION

───────────────── ───────────────

QPS over time Age distribution

│ Training Production

│ 🚨 spike! │████ │██████

│ ╱╲ │██████ │████

│ ╱ ╲ │████ │██

│____╱ ╲____ │██ │████

└──────────────▶ time 0-20 20-40 40-60 60+

"Value jumped 3x in 5 min" "Distribution shifted right"

Critical difference — gradual drift:

Week 1: avg_age = 30 ─┐

Week 2: avg_age = 31 │ Anomaly baseline shifts with data

Week 3: avg_age = 32 │ → No alert triggered!

... │

Week 20: avg_age = 50 ─┘ Still "normal" vs recent, but VERY different from training!

[!TIP] Interview phrasing: "Anomaly detection asks 'is now different from recent?' while drift detection asks 'is now different from training?' Gradual drift fools anomaly detection because the baseline shifts with it—that's why ML monitoring needs both Datadog for operational anomalies and PSI for model validity."

Multiple Baseline Strategy (Production-Grade):

In practice, use PSI with multiple baselines at different timescales:

| Baseline | Compares | Catches | Typical Threshold |

|---|---|---|---|

| Training vs Today | Fixed baseline | Gradual long-term drift | PSI > 0.25 |

| Last week vs Today | Rolling window | Recent changes | PSI > 0.15 |

| Yesterday vs Today | Very recent | Sudden anomalies | PSI > 0.10 |

┌─────────────────┐ ┌─────────────────┐ ┌─────────────────┐

│ LONG-TERM │ │ MID-TERM │ │ SHORT-TERM │

│ Training vs Now │ │ Last wk vs Now │ │ Yesterday vs Now│

├─────────────────┤ ├─────────────────┤ ├─────────────────┤

│ PSI > 0.25? │ │ PSI > 0.15? │ │ PSI > 0.10? │

│ → "Model stale" │ │ → "Something │ │ → "Sudden shift │

│ │ │ changed" │ │ investigate" │

└─────────────────┘ └─────────────────┘ └─────────────────┘

What to monitor with multiple baselines:

| Metric | Why Multiple Baselines Help |

|---|---|

| Feature values | Catch input drift at different timescales |

| Prediction scores | Detect model behavior changes (sudden vs gradual) |

| % predicted positive | Class distribution shift (e.g., fraud rate jumped) |

| Score distribution | Confidence calibration drift |

Example — % Predicted Positive:

Training: 35% fraud ─┐

Last week: 36% fraud │ PSI vs training = 0.02 ✅

Today: 52% fraud ─┘ PSI vs training = 0.18 ⚠️

PSI vs last week = 0.31 🚨 ← Catches sudden jump!

[!TIP] Interview nuance: "We use multiple PSI baselines—training baseline catches 'has the model drifted too far to be valid?' while weekly baseline catches 'did something break recently?' Different thresholds too: we tolerate more drift from training (expected over time) than from last week (unexpected)."

Production Monitoring Loop¶

Monitor → Detect → Diagnose → Collect → Relabel → Retrain

│ │ │ │ │ │

└─ DD dashboards └─ Root cause └─ Affected samples └─ Deploy

prediction dist analysis for labeling new model

3D Mnemonic — Detect, Diagnose, Deploy: The core philosophy simplifies to three stages: detect the drift, diagnose the root cause, deploy the fix. This maps to established industry frameworks:

| Framework | Domain | Stages | ML Drift Mapping |

|---|---|---|---|

| OODA Loop | Military/Decision | Observe → Orient → Decide → Act | Monitor → Analyze → Plan → Retrain |

| PDCA | Quality (Deming) | Plan → Do → Check → Act | Design → Deploy → Monitor → Iterate |

| DDR | Incident Response | Detect → Respond → Recover | Detect drift → Investigate → Retrain |

| MAR | SRE/DevOps | Monitor → Alert → Remediate | Dashboard → Threshold → Fix |

[!TIP] Interview flex: "Our drift response follows the classic Detect-Diagnose-Deploy loop—similar to OODA in military decision-making or PDCA in quality engineering. The key is having the instrumentation (logging) in place so you can close the loop quickly."

💡 Key insight: Log all model inputs/outputs. This enables you to: (1) detect drift via distribution monitoring, (2) pull affected samples for diagnosis, (3) collect retraining data without new instrumentation.

Interview Answer Template¶

"How do you handle model drift in production?"

"I approach drift at multiple stages:

For detection, I monitor prediction distributions in dashboards—if my model suddenly classifies 40% as 'payment issues' when it's usually 20%, that's a signal. I also track feature statistics using PSI to catch input drift early.

For diagnosis, I log all inputs and outputs, so when drift is detected, I can pull affected samples and analyze whether it's feature drift, label drift, or true concept drift.

For mitigation, my retraining policy triggers on observed drift or performance degradation. Since I've logged the data, I can collect affected samples, relabel them if needed, and retrain the model.

The key is instrumenting logging upfront—it costs little but enables the entire monitor-detect-retrain loop."

Feature Drift vs Concept Drift Deep Dive¶

Feature Drift Example (P(X) changes, P(y|X) same): - Users start using more emojis in chat - The distribution of inputs changed - But "😡 my order was cancelled" still means the same intent - Fix: Retrain on new data, model learns new patterns for same labels

Concept Drift Example (P(y|X) changes): - Company policy changes: "refund eligible" threshold lowered - Same chat message "delivery was 5 mins late" now gets different label - The meaning of labels changed - Fix: Need new labels reflecting new policy, not just more data

When to Retrain¶

| Signal | Type | Action |

|---|---|---|

| Performance metrics dropping | Concept drift likely | Priority retrain |

| Feature PSI > 0.25 | Feature drift | Collect new samples, retrain |

| Prediction distribution shift | Unknown cause | Investigate before retraining |

| New category/edge cases appearing | Label drift | Expand label taxonomy, collect examples |

Logistic Regression¶

One-liner: Linear model for classification using sigmoid function to output probabilities.

[!TIP] A technique to adapt linear regression to make classifications.

For classification, - \(y=0\) or \(1\) \(\Rightarrow 0 \le h(x) \le 1\) (for Logistic Regression) - But, for linear regression, \(h(x)\) can be \(<0\) or \(>1\) - Therefore, we introduce a non-linear function (e.g., sigmoid) to bound the output between 0 and 1 - \(g(z) = \large\frac{1}{1+e^{-z}}=\Large\frac{e^{z}}{1+e^{z}}\) where \(z = w_{0} + w_{1}x_{1} + ...\)

Another way of interpreting it (log-odds perspective),

The Problem: We want to predict probability \(p \in (0, 1)\), but linear regression outputs \(z \in (-\infty, +\infty)\). We need a bridge between these two ranges.

What are Odds? - Odds express how likely something is to happen compared to it not happening - \(odds = \dfrac{p}{1-p} = \dfrac{\text{chance of happening}}{\text{chance of NOT happening}}\) - Examples: - Fair coin: \(p=0.5\), \(odds = 0.5/0.5 = 1\) ("even odds" — 1 success per 1 failure) - 80% chance of rain: \(p=0.8\), \(odds = 0.8/0.2 = 4\) ("4 to 1 odds" — 4 rainy days per 1 dry day) - Rolling a 6: \(p=1/6\), \(odds = (1/6)/(5/6) = 0.2\) ("1 to 5 odds" — 1 success per 5 failures)

Why Log-Odds? — The Bridge

| Transformation | Range | Problem? |

|---|---|---|

| \(p\) (probability) | \((0, 1)\) | Bounded both sides |

| \(\dfrac{p}{1-p}\) (odds) | \((0, \infty)\) | Still bounded on left |

| \(\log\dfrac{p}{1-p}\) (log-odds) | \((-\infty, +\infty)\) | Unbounded — matches linear output! |

Log-odds transforms probability into an unbounded range that matches what a linear model can output.

The Core Assumption of Logistic Regression

We assume that log-odds is a linear function of features: - \(\log\dfrac{p}{1-p} = w_{0} + w_{1}x_{1} + ... = z\)

This is a modeling choice: "I believe the log-odds of the outcome varies linearly with the input features."

Deriving the Sigmoid (Inverse of Log-Odds)

Starting from \(z = \log\frac{p}{1-p}\), solve for \(p\): 1. \(e^{z} = \frac{p}{1-p}\) 2. \(e^{z}(1-p) = p\) 3. \(e^{z} = p + p \cdot e^{z} = p(1 + e^{z})\) 4. \(p = \dfrac{e^{z}}{1+e^{z}} = \dfrac{1}{1+e^{-z}}\) ← This is the sigmoid function!

The sigmoid is not arbitrary — it's the mathematical inverse that recovers probability from log-odds.

Summary Table

| \(p\) | \(odds = \dfrac{p}{1-p}\) | \(\log(odds)\) | Meaning |

|---|---|---|---|

| 0 | 0 | \(-\infty\) | Event never happens |

| 0.5 | 1 | 0 | 50-50 chance |

| 1 | \(\infty\) | \(+\infty\) | Event always happens |

Interpreting Coefficients

If coefficient \(w_1 = 0.5\), increasing \(x_1\) by 1 unit: - Increases log-odds by 0.5 - Multiplies odds by \(e^{0.5} \approx 1.65\) (65% increase in odds)

Training Logistic Regression with Cross-Entropy Loss¶

📖 See also: Loss Functions for comprehensive coverage of Cross-Entropy vs MSE and when to use each.

Logistic Regression is trained using Cross-Entropy (Log Loss) as the loss function:

The training loop (Gradient Descent): 1. Forward pass: Compute predictions \(\hat{p} = \sigma(Xw)\) (probabilities via sigmoid) 2. Compute loss: Calculate Cross-Entropy loss \(L\) 3. Backward pass: Compute gradients \(\frac{\partial L}{\partial w}\) 4. Update weights: \(w = w - \alpha \cdot \frac{\partial L}{\partial w}\)

Why Cross-Entropy for classification (not MSE)?

| Aspect | Cross-Entropy | MSE for Classification |

|---|---|---|

| Gradient behavior | Strong gradients when wrong | Weak gradients when \(\hat{p}\) near 0 or 1 |

| Probabilistic interpretation | Yes (maximizes likelihood) | No |

| Convergence | Faster | Slower, can get stuck |

Example: If true label \(y=1\) and prediction \(\hat{p}=0.01\) (very wrong): - Cross-Entropy loss: \(-\log(0.01) = 4.6\) (high loss, strong gradient) - MSE loss: \((1 - 0.01)^2 = 0.98\) (high loss, but gradient of sigmoid is tiny near 0)

Key insight: Cross-Entropy pairs naturally with sigmoid because the gradient simplifies to \((\hat{p} - y)\), giving strong learning signals even when predictions are confidently wrong.

KNN¶

One-liner: Classify based on nearest neighbors in feature space — simple, non-parametric, no training step.

KNN (K-Nearest Neighbors) is a simple, instance-based learning algorithm that makes predictions based on similarity to nearby training examples (i.e., \(k\) nearest neighbours).

How it works: 1. For a new data point, find the K closest training examples (using distance metrics like Euclidean) 2. For classification: Take majority vote of the K neighbors' classes 3. For regression: Take average of the K neighbors' values

Key characteristics: - Lazy learning: ⚠️ No explicit training phase; it stores all training data ⚠️ - Non-parametric: ⚠️ Makes no assumptions about data distribution ⚠️ - Distance-based: Relies on feature similarity (see below) - Sensitive to scale: Features should be normalized (see below)

[!TIP] Analogy:

"Tell me who your neighbors are, and I'll tell you who you are" - the prediction depends on nearby examples.

Choosing K: Small K (e.g., 1) = sensitive to noise; large K = smoother but may miss local patterns.

Distance Metrics and Feature Similarity¶

"Distance-based" means KNN measures how similar/different data points are using a mathematical distance function.

Common Distance Metrics:

| Metric | Formula | Description |

|---|---|---|

| Euclidean | \(d = \sqrt{\sum_{i=1}^{n}(x_i - y_i)^2}\) | Straight-line distance (most common) |

| Manhattan | \(d = \sum_{i=1}^{n}\|x_i - y_i\|\) | Sum of absolute differences (like walking city blocks) |

| Minkowski | \(d = (\sum_{i=1}^{n}\|x_i - y_i\|^p)^{1/p}\) | Generalization of Euclidean (p=2) and Manhattan (p=1) |

Feature Similarity Example:

| Person | Age | Income | Height |

|---|---|---|---|

| A | 25 | 50k | 170cm |

| B | 27 | 52k | 172cm |

| C | 60 | 120k | 165cm |

- A and B have small differences → small distance → similar

- A and C have large differences → large distance → dissimilar

[!NOTE] KNN assumes similar inputs produce similar outputs. To predict if someone buys a product, KNN looks at the K most similar people and checks what they did.

Why Normalization Matters for KNN¶

KNN uses distance metrics (typically Euclidean) to find neighbors. Without normalization, features with larger scales dominate the distance calculation, which can select completely different neighbors.

Example: Consider two features with different scales:

- age: ranges from 0-100

- income: ranges from 0-100,000

| Point | Age | Income | Age (normalized) | Income (normalized) |

|---|---|---|---|---|

| A | 25 | 50,000 | 0.25 | 0.50 |

| B | 30 | 50,100 | 0.30 | 0.501 |

| C | 65 | 50,000 | 0.65 | 0.50 |

Distance from A without normalization: - Distance(A→B) = √[(30-25)² + (50,100-50,000)²] = √[25 + 10,000] ≈ 100.1 - Distance(A→C) = √[(65-25)² + (50,000-50,000)²] = √[1,600 + 0] = 40 - Result: C is selected as closer (40 < 100.1)

Distance from A with normalization (0-1 scaling): - Distance(A→B) = √[(0.30-0.25)² + (0.501-0.5)²] ≈ 0.05 - Distance(A→C) = √[(0.65-0.25)² + (0.5-0.5)²] = 0.4 - Result: B is selected as closer (0.05 < 0.4)

[!IMPORTANT] Without normalization, a trivial income difference of $100 was treated as more important than a significant age difference of 40 years. Normalization ensures all features contribute proportionally to the distance calculation

Curse of Dimensionality: Why KNN Struggles with High Dimensions¶

In high-dimensional spaces, distance metrics break down - this is called the curse of dimensionality.

Problem 1: Distance Concentration (All Points Become Equidistant)

In high dimensions, all distances converge to a narrow range around \(\sqrt{d}\) (where d = dimensions). This causes the ratio between nearest and farthest points to shrink:

| Dimensions | Nearest Distance | Farthest Distance | Ratio |

|---|---|---|---|

| 2 | 1.0 | 5.0 | 5.0x |

| 10 | 3.1 | 4.2 | 1.35x |

| 100 | 9.5 | 10.2 | 1.07x |

| 1000 | 31.0 | 31.8 | 1.03x |

When nearest ≈ farthest, "nearest" loses meaning.

Problem 2: Data Becomes Sparse

Volume grows exponentially with dimensions: - 2D: ~100 points to cover space (10×10) - 3D: ~1,000 points (10×10×10) - 10D: ~10 billion points (10¹⁰)

Your fixed dataset becomes increasingly sparse, making neighbors unreliable.

Solutions: - Dimensionality reduction: PCA, t-SNE, autoencoders - Feature selection: Keep only relevant features - Different algorithms: Tree-based methods (Random Forest, XGBoost) handle high dimensions better

Why KNN is problematic for recommendations?¶

- High dimensionality - User-item matrices typically have thousands of features (items, user attributes, behavioral signals). As shown in the table above, when dimensions increase:

- At 2 dimensions: nearest vs farthest ratio is 5x (easy to distinguish neighbors)

- At 1000 dimensions: ratio drops to 1.03x (almost no meaningful difference)

- Distance concentration - All users become "equidistant" from each other, making the concept of "K nearest neighbors" nearly meaningless.

- Sparse data - Recommendation data is typically sparse (users rate only a tiny fraction of items), making distance calculations even less reliable.

What's used instead for recommendations: - Matrix Factorization (SVD, ALS) - Reduces dimensionality by learning latent factors - Collaborative Filtering with embeddings - Projects users/items into lower-dimensional dense spaces - Deep learning approaches - Neural networks that learn compressed representations - Approximate Nearest Neighbors (ANN) - If you must use similarity-based methods, use approximate algorithms (FAISS, Annoy, ScaNN) that work in reduced embedding spaces, not raw feature space

So the pattern is: reduce dimensionality first (via embeddings or factorization), then optionally use similarity/neighbor-based methods in that compressed space.

K-means Clustering¶

One-liner: K-means partitions n data points into k clusters by iteratively assigning points to the nearest centroid and updating centroids until convergence.

| Aspect | Description |

|---|---|

| Type | Unsupervised learning (clustering) |

| Data | Continuous features only (use K-modes for categorical) |

| Output | k cluster assignments + k centroid positions |

| Objective | Minimize within-cluster sum of squares (inertia) |

K-means Algorithm Steps¶

| Step | Action | Formula/Detail |

|---|---|---|

| 1 | Initialize k centroids | Random points, or use K-means++ for smarter init |

| 2 | Assign each point to nearest centroid | Use Euclidean distance: \(d(x, c) = \sqrt{\sum(x_i - c_i)^2}\) |

| 3 | Update centroids | New centroid = mean of all points in cluster |

| 4 | Repeat steps 2-3 | Until centroids stop moving (or max iterations) |

Iteration 1: [●] ○ ○ ○ → Assign points to nearest centroid

[●] = centroid

Iteration 2: ○ [●] ○ ○ → Centroid moves to cluster mean

Points reassigned

Convergence: ○ ○ [●] ○ → Centroid stable, algorithm stops

Choosing k (Number of Clusters)¶

| Method | How It Works | When to Use |

|---|---|---|

| Elbow Method | Plot inertia vs k, look for "elbow" where improvement slows | Quick visual check |

| Silhouette Score | Measures how similar points are to own cluster vs others (-1 to +1) | Need a single metric |

| Domain Knowledge | Business context dictates k | Customer segments, product categories |

| Gap Statistic | Compare inertia to random uniform distribution | More rigorous than elbow |

Elbow Method Intuition:

K-means Limitations¶

| Limitation | Problem | Workaround |

|---|---|---|

| Assumes spherical clusters | Struggles with elongated or irregular shapes | Use DBSCAN or Gaussian Mixture Models |

| Sensitive to initialization | Different starting centroids → different results | Use K-means++ or run multiple times |

| Must specify k upfront | Often don't know optimal k | Use elbow/silhouette methods |

| Sensitive to outliers | Outliers pull centroids away | Use K-medoids or remove outliers first |

| Requires scaling | Features with larger scale dominate distance | Always standardize features first |

| Only continuous features | Can't handle categorical directly | Use K-modes or one-hot encode |

Feature Scaling for K-means¶

⚠️ Critical: K-means uses Euclidean distance, so features must be on similar scales!

| Scaler | Formula | When to Use |

|---|---|---|

| Standard Scaler | \(z = \dfrac{x - \mu}{\sigma}\) | Default choice, preserves outliers |

| Min-Max Scaler | \(x' = \dfrac{x - x_{min}}{x_{max} - x_{min}}\) | Need bounded [0,1] range |

Interview Answer Template¶

"How does K-means work?"

"K-means is an unsupervised clustering algorithm that partitions data into k groups. The algorithm works iteratively:

1. Initialize k centroids (randomly or using K-means++) 2. Assign each point to its nearest centroid using Euclidean distance 3. Update each centroid to be the mean of its assigned points 4. Repeat until centroids stabilize

The objective is to minimize within-cluster variance (inertia). Key limitations include: assuming spherical clusters, sensitivity to initialization and outliers, and requiring you to specify k upfront. To choose k, I typically use the elbow method or silhouette score. Also, feature scaling is critical since K-means uses distance."

Decision Trees (DT) a.k.a. Classification and Regression Trees (CART)¶

One-liner: Tree-structured model splitting on features to minimize impurity (Gini or entropy).

The Core Idea¶

A decision tree is like a flowchart of yes/no questions that leads to a prediction. Think of it as playing "20 Questions" to guess something.

- Supervised learning method for both classification and regression

- Nodes = questions about features (e.g., "Is weight > 15?")

- Leaves = final predictions

- Interpretable: Easy to visualize and explain

How Decision Trees Make Predictions¶

Classification: - Prediction = majority class (mode) of training samples in that leaf - Can also return class probabilities (proportion of each class) - If there's a tie: random selection, highest prior probability, or first class encountered

Regression: - Prediction = mean value of training samples in that leaf

Example 1: Classification Tree (Tennis)¶

[Outlook?]

/ | \

Sunny Overcast Rainy

/ | \

[Humidity?] PLAY [Windy?]

/ \ / \

High Normal Yes No

| | | |

DON'T PLAY DON'T PLAY

How it works: 1. Start at the root: "What's the outlook?" 2. Follow the branch matching your answer 3. Either get a prediction (leaf) or another question (node) 4. Repeat until you reach a leaf

Example 2: Classification with Numerical Features¶

Classify animals as "Cat" or "Dog" using weight and height:

Training data: | Weight (kg) | Height (cm) | Animal | |-------------|-------------|--------| | 4 | 25 | Cat | | 5 | 28 | Cat | | 25 | 60 | Dog | | 30 | 65 | Dog | | 8 | 35 | Cat |

The tree might learn:

Why this split? Because weight > 15 perfectly separates cats from dogs in this data.

Training: How to Find the Best Split¶

The algorithm recursively finds the best feature and threshold to split on at each node.

Impurity Measures vs Loss Functions¶